Characterizing social media manipulation in the 2020 U.S. presidential election

- Authors: Emilio Ferrara, Herbert Chang, Emily Chen, Goran Muric, Jaimin Patel

- Publication, Year: First Monday, October 2020

- Link to Article

Key Insights

Analyzes a unique dataset of over 240 million election-related tweets recorded between 20 June and 9 September 2020

Focus on characterizing:

- Automation (i.e. bot behavior)

- Distortion (e.g., manipulation of narratives, injection of conspiracies or rumors)

Discover that bots exacerbate the consumption of content produced by users with their same political views, worsening the issue of political echo chambers.

They also discuss efforts carried out by Russia, China and other countries

Draw a clear connection between bots, hyper-partisan media outlets, and conspiracy groups, suggesting the presence of systematic efforts to distort political narratives and propagate disinformation.

Intro

- The authors attempt to characterize social media manipulation on Twitter in the lead-up to the 2020 election.

- Data covers 20 June to 9 September 2020, specifically election-related tweets.

- The period of observation includes several salient real-world political events, such as the Democratic National Committee (DNC) and Republican National Committee (RNC) conventions.

Using a combination of state-of-the-art machine learning technologies and human validation, we investigate a number of research questions pertaining to two signatures of manipulation:

- Automation —> evidence for adoption of automated accounts governed predominantly by software rather than human users

- Distortion —> of salient narratives of discussion of political events, e.g., with the injection of inaccurate information, conspiracies or rumors

Data Collection

A dataset of 600 million election-related tweets was gathered.

- Details on the paper where they make this dataset public can be found here

In this paper they focus on a smaller subset during a time period that is closer to the election

- 20 June to 9 September 2020

- Tracked tweets that mentioned any Republican or Democratic candidate’s official campaign or personal account

The data analyzed in this paper track these politicians' behavior on Twitter, as well as the people who mention them in their tweets

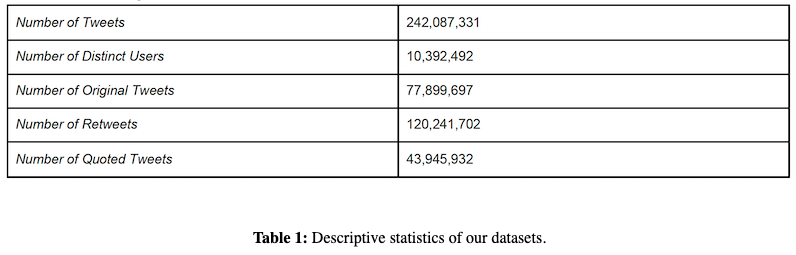

Descriptive Stats on Dataset

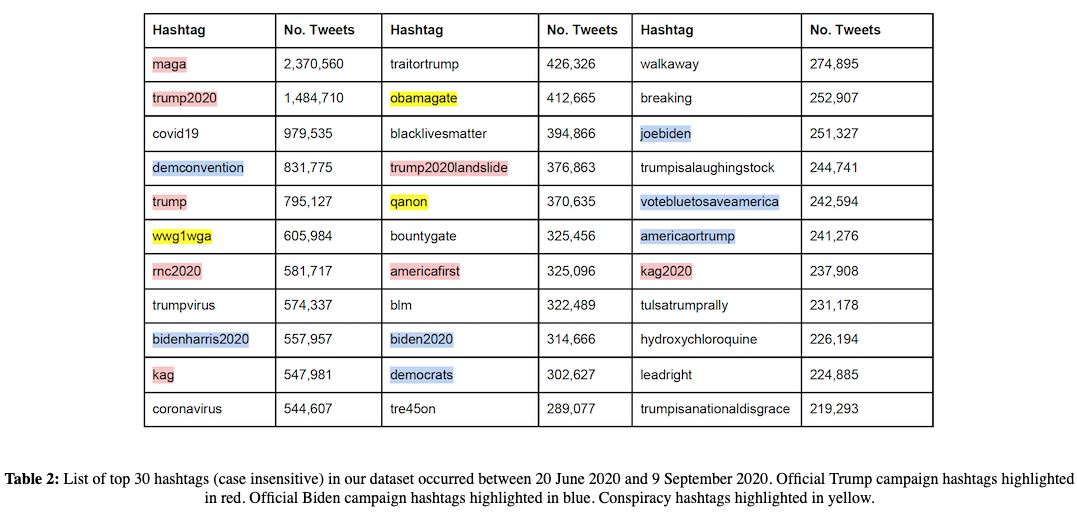

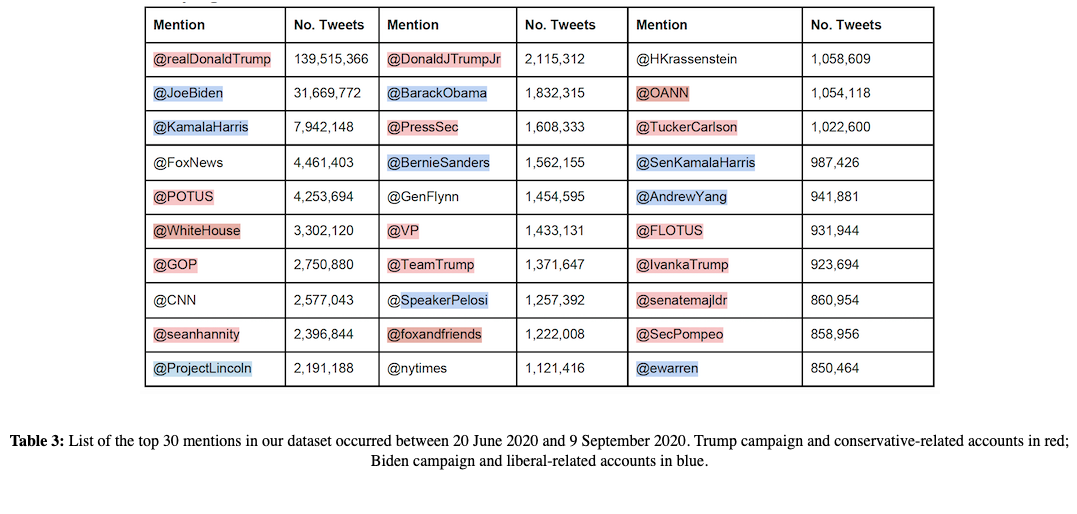

Top 30 hashtags and mentions

Twitter unhashed banned user dataset

As part of Twitter's Transparency Center initiative, a large set of banned accounts (backed by governments) were published by Twitter

Dataset can be found here: information operations dataset

Countries included in the dataset:

- Saudi Arabia

- China

- Russia

- Turkey

- Honduras

- Indonesia

- Nigeria/Ghana

Each country is associated with two datasets:

The banned users including metadata

- Screen_ID

- Follower count

The tweets of these users

- General tweet information

- Targets of the banned accounts: gathered by extracting all users mentioned by each banned account

Bot Detection

- Use Botometer V3 and V4

… use a conservative approach to classify bots as accounts that sit at the top end of the bot score distribution, rather than carrying out a binary classification of accounts into bots and humans

This addresses the problem of determining the nature of borderline cases for which detection can be inaccurate, and conversely allows to focus on accounts that exhibit clear bot traits. The results will be manually validated for accuracy.

Characterizing User Political Bias

Similar to prior work (Bovet and Makse, 2019; Badawy, et al., 2019), we identify a set of 29 prominent media outlets that appear on Twitter.

Using allsides.com non-partisan ratings, they categorize media outlets into the political categories (left, lean left, center, lean right, right)

- Endorsement of media outlet = retweet without comment

- Users' political bias is calculated as the "average political bias of all media outlets they endorsed"
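The averaging step above can be sketched in a few lines. This is only an illustration: the numeric encoding of the five AllSides categories, the outlet names, and the choice to count every retweet once are all assumptions, not details taken from the paper.

```python
from statistics import mean

# Assumed numeric encoding of the five AllSides categories (not from the paper).
BIAS_SCORE = {"left": -2, "lean left": -1, "center": 0, "lean right": 1, "right": 2}

# Hypothetical outlet handles mapped to their rating.
OUTLET_RATING = {
    "outlet_a": "left",
    "outlet_b": "lean left",
    "outlet_c": "center",
    "outlet_d": "right",
}

def user_bias(endorsed_outlets):
    """Average bias over a user's endorsements (retweets without comment).

    Outlets that are not in the rated set are ignored; a user with no
    rated endorsements gets None instead of a score.
    """
    scores = [BIAS_SCORE[OUTLET_RATING[o]] for o in endorsed_outlets if o in OUTLET_RATING]
    return mean(scores) if scores else None

# A user who retweeted one "left" and one "lean left" outlet.
print(user_bias(["outlet_a", "outlet_b"]))
```

Returning `None` for users without rated endorsements keeps them out of any later left/right comparison instead of silently treating them as centrist.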

Automation

Botometer V3 is utilized to calculate botscores for 32% of the accounts in their dataset

Botometer V4 is utilized for manual validation (via the web interface) and for examples in the paper

They look at only tweets sent by users they have botscores for and then split them into top and bottom deciles

- Top = bots (botscore range —> 0.485 to 0.988)

- Bottom = humans (botscore range —> 0.0144 to 0.0327)

- Note: Scores range from 0 to 1, where 1 is most likely a bot
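A minimal sketch of the decile split described above, assuming bot scores are already available as a mapping from account to score. The function name and the toy data are hypothetical; the paper's actual pipeline is not shown in these notes.

```python
def split_by_decile(botscores):
    """Return (likely_humans, likely_bots): the bottom and top 10% of accounts by score."""
    ranked = sorted(botscores.items(), key=lambda kv: kv[1])
    k = len(ranked) // 10  # size of one decile
    humans = [user for user, _ in ranked[:k]]
    bots = [user for user, _ in ranked[-k:]] if k else []
    return humans, bots

# Toy data: 20 accounts with evenly spaced scores in [0, 1).
scores = {f"u{i}": i / 20 for i in range(20)}
humans, bots = split_by_decile(scores)
print(humans, bots)
```

Accounts in the middle eight deciles are simply left unlabeled, which mirrors the conservative approach of not forcing borderline cases into either class.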

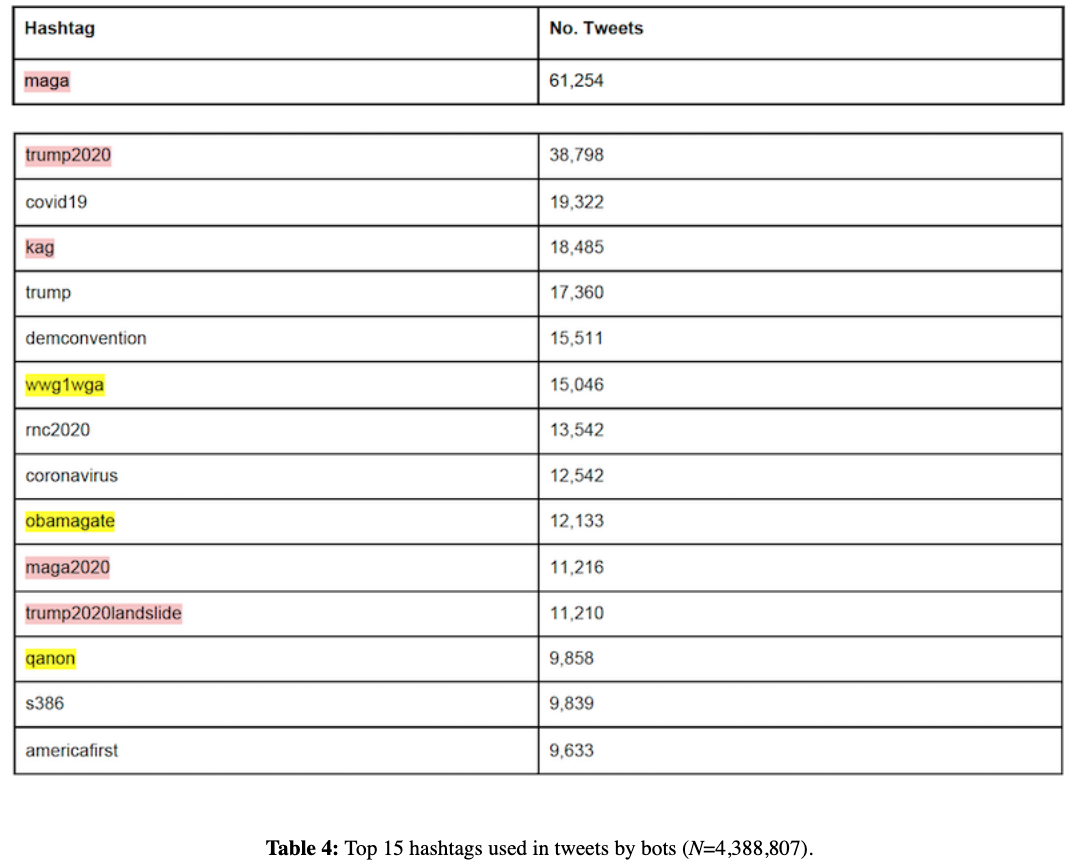

Top 15 Hashtags Utilized by Bots

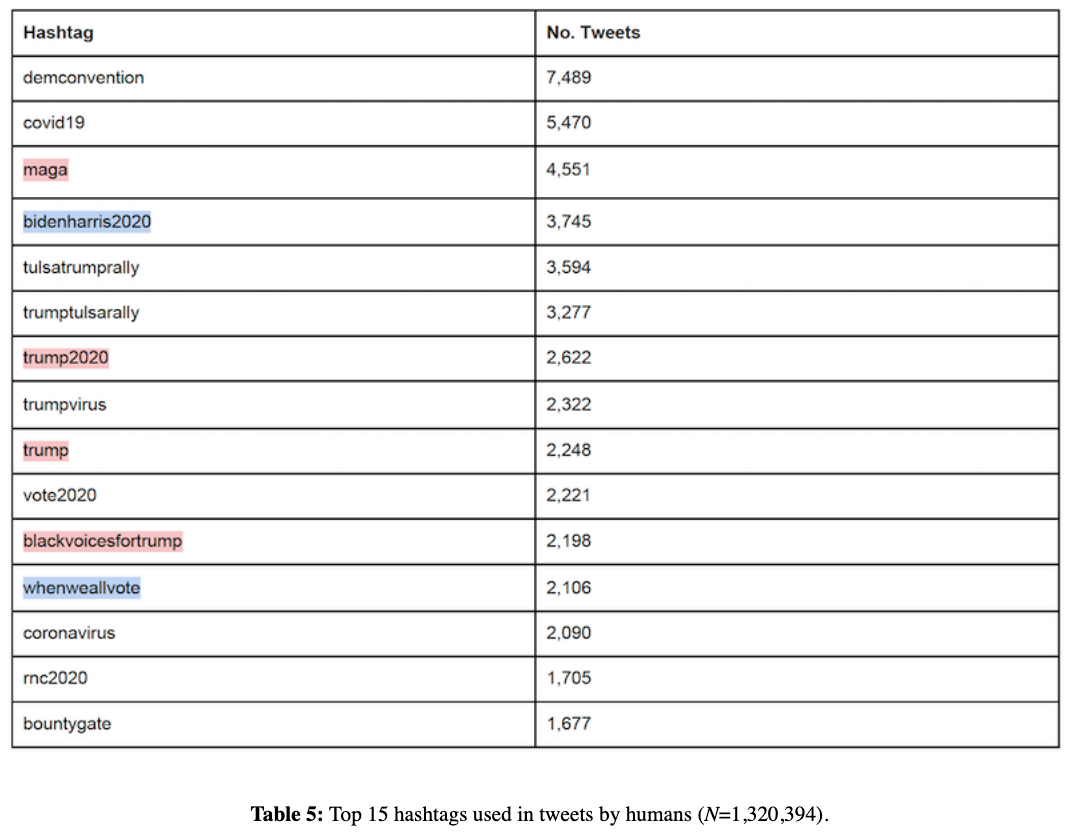

Top 15 Hashtags Utilized by Humans

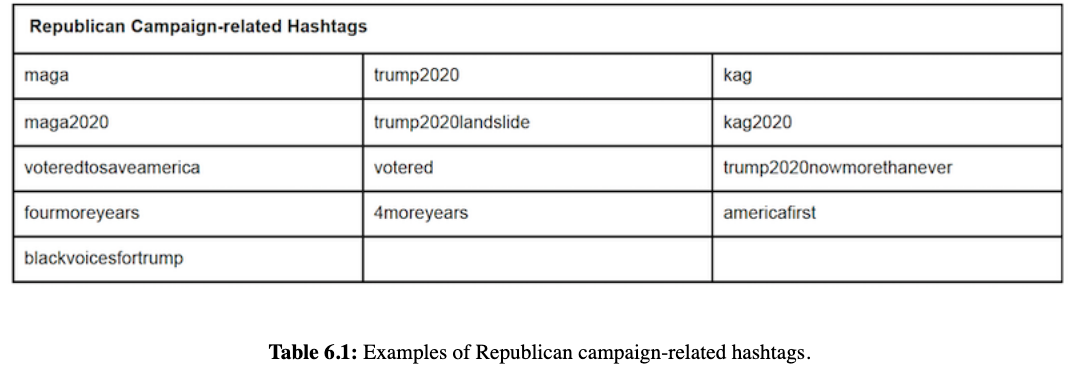

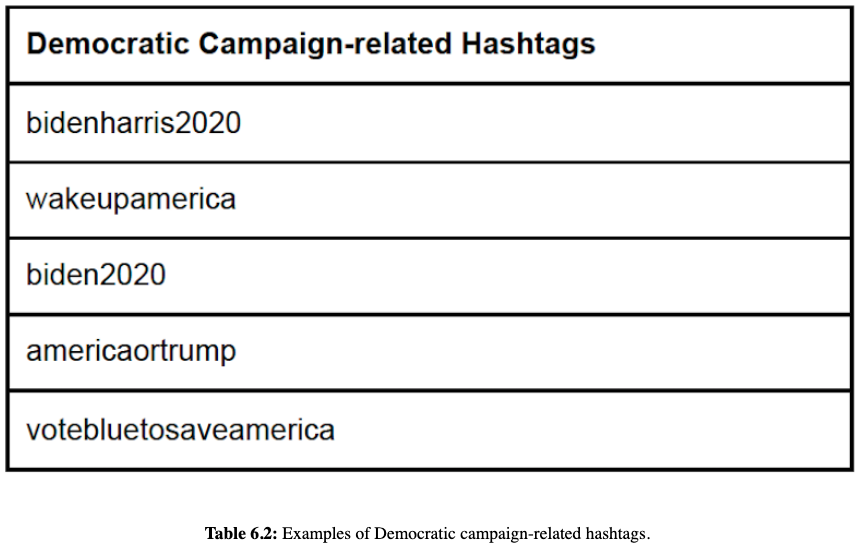

Bots in Campaign Discourse

- They identify the top 50 hashtags utilized by both bots and humans (the highest and lowest decile Botometer V3 scores — see Automation) that are specifically related to Republican and Democratic campaigns

- They use these hashtags as a proxy for the political affiliation of the users

While we do recognize that there may be users who use these hashtags in tweets with opposing viewpoints, vast amounts of research in political polarization assert that that is relatively infrequent (Jiang, et al., 2020; Bail, et al., 2018). Hence, we selected hashtags that were most relevant to both campaigns, such as “trump2020” and “bidenharris2020”.

Republican Campaign-related Hashtags

Democratic Campaign-related Hashtags

- Examples of tweets from bots and humans can be found in the original publication here

- They manually inspected some tweets as validation for their above assumptions

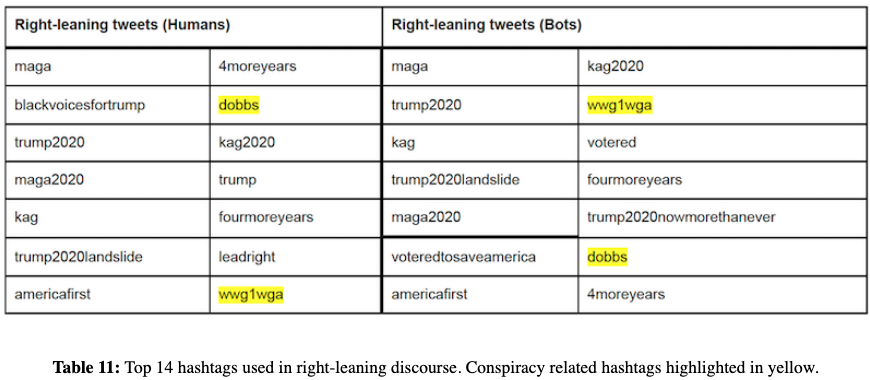

Hashtags Utilized by Right-leaning Humans/Bots

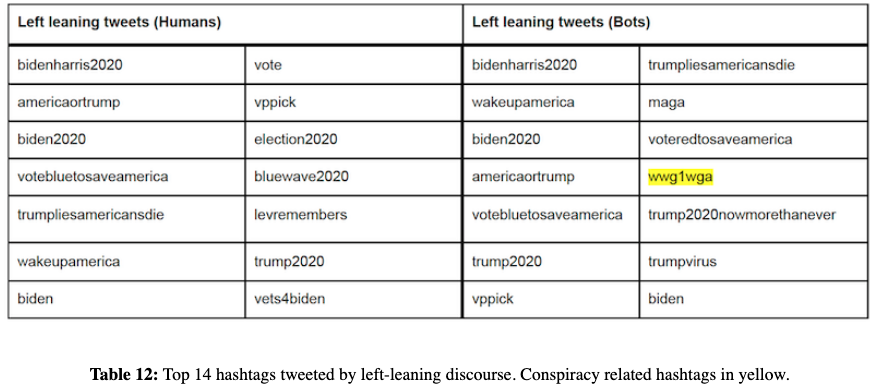

Hashtags Utilized by Left-leaning Humans/Bots

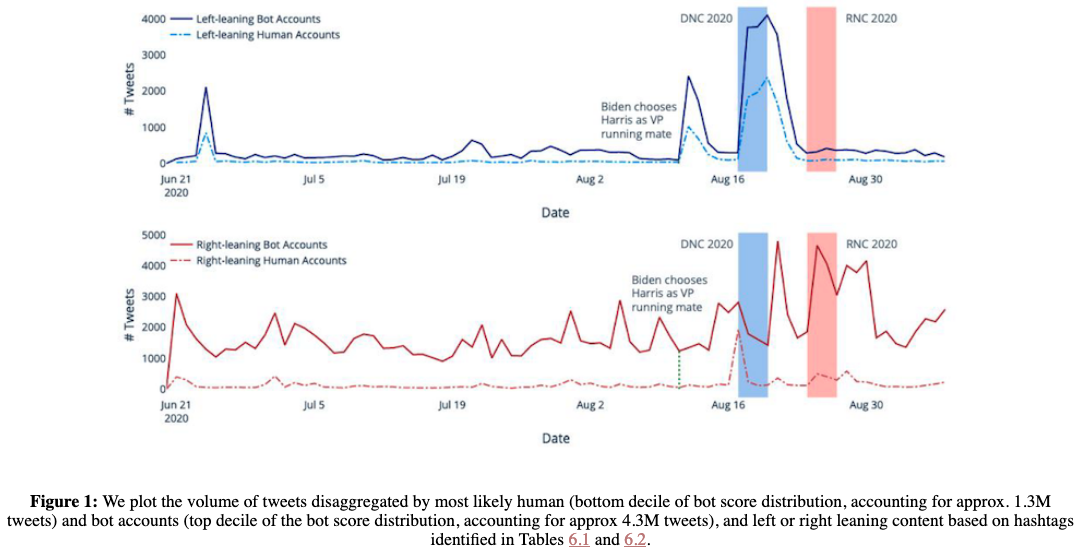

Tweets Split by Party and Botness

Bots are generally much more active, as we would expect.

Around specific events, the two volumes of activity become close and comparable

- Left-leaning discourse spikes after Biden chose Kamala Harris as his running mate on 11 August 2020

- A similar spike in the aftermath of the Democratic National Convention (DNC), held 17–20 August, is exhibited for both left- and right-leaning bots

A manual inspection was done to confirm that this surge was seen for both humans and bots alike.

The right-leaning discourse also exhibits similar phenomena surrounding the Republican National Convention that took place from 24–27 August

Interestingly, we also see right-leaning discourse increase in activity during the DNC

- They note, however, that the proportional increase in activity is much higher for users that fall in the bottom decile of Botometer scores

- To understand this increase, they take a look at changes in hashtags/keywords/bigrams/etc just before (Aug. 15-16) and after (Aug. 17-19) the DNC
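One simple way to make a before/after comparison like this concrete is to count hashtags in each window and look at the difference. The sketch below assumes tweets are given as `(date, hashtags)` pairs; the function name and sample tweets are hypothetical, and the paper may well use a different measure.

```python
from collections import Counter
from datetime import date

def hashtag_shift(tweets, before, after):
    """Count hashtags in two date windows and return after-minus-before differences.

    tweets: iterable of (date, list_of_hashtags); before/after: (start, end), inclusive.
    """
    counts = {"before": Counter(), "after": Counter()}
    for day, hashtags in tweets:
        if before[0] <= day <= before[1]:
            counts["before"].update(hashtags)
        elif after[0] <= day <= after[1]:
            counts["after"].update(hashtags)
    tags = set(counts["before"]) | set(counts["after"])
    return {t: counts["after"][t] - counts["before"][t] for t in tags}

sample = [
    (date(2020, 8, 15), ["maga"]),
    (date(2020, 8, 16), ["maga", "trump2020"]),
    (date(2020, 8, 18), ["maga", "dnc", "dnc"]),
]
shift = hashtag_shift(
    sample,
    before=(date(2020, 8, 15), date(2020, 8, 16)),
    after=(date(2020, 8, 17), date(2020, 8, 19)),
)
print(sorted(shift.items(), key=lambda kv: kv[1], reverse=True))
```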

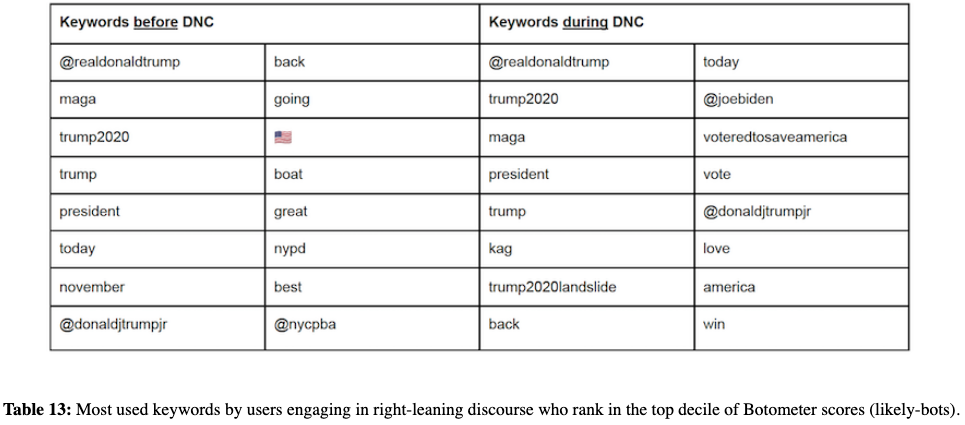

Republican Bots Change Tweeting Behavior Around DNC

Human-bot Interactions and Echo Chambers

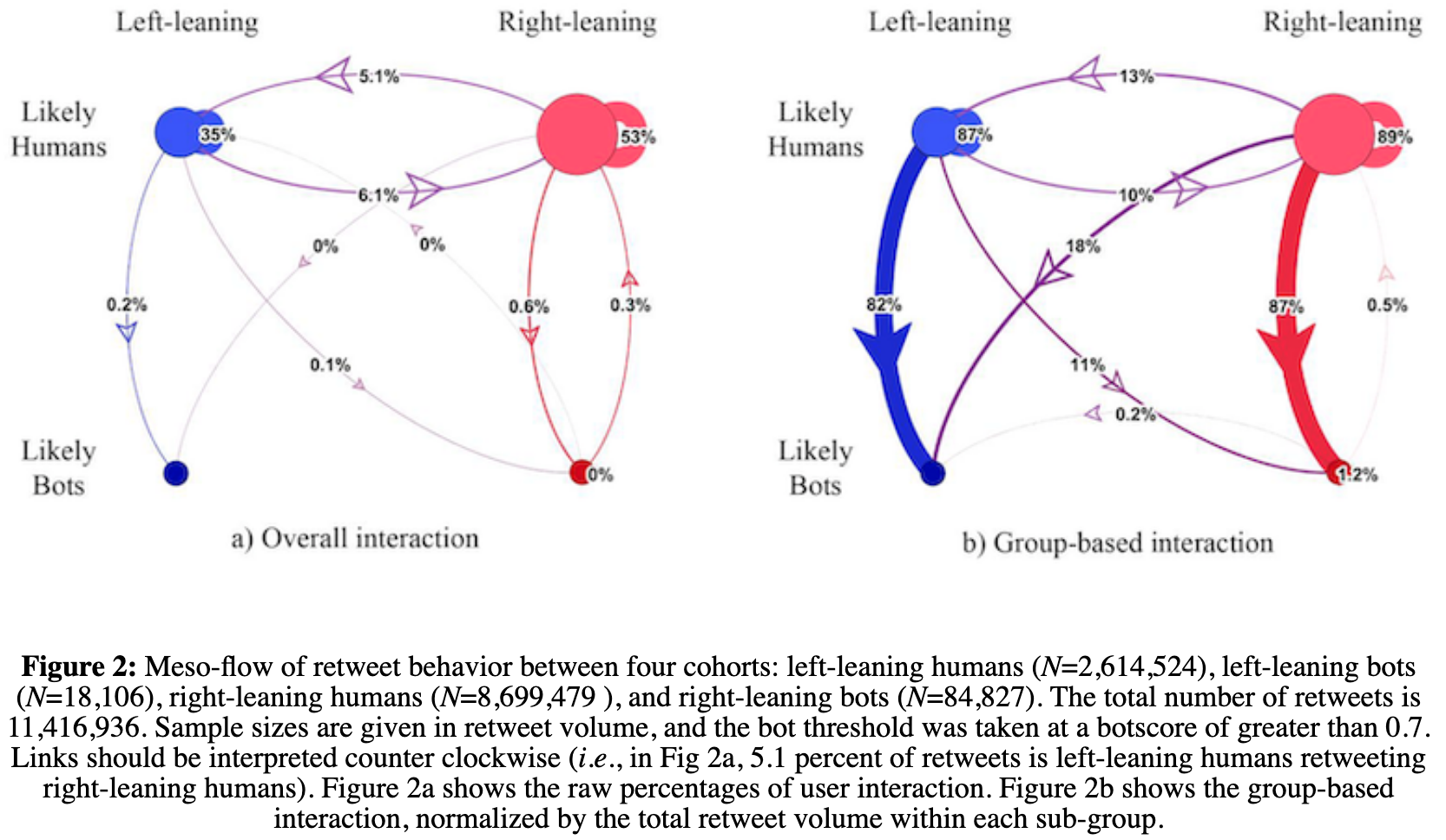

Bots almost exclusively retweet accounts that are humans.

This is consistent with:

- Prior observations that bots typically do not generate original content

- The intuition that they tend to borrow credibility by targeting visible human users and rebroadcasting their content.

There are proportionally more right-leaning bot accounts, at 4:1

Likely-humans — right vs. left accounts — are present in a 2:1 ratio

Of total retweets… (Fig. 2a)

- 35% are left-leaning humans retweeting other left-leaning humans

- 53% are right-leaning humans retweeting other right-leaning humans

Looking within group (Fig. 2b), however, we see clear political echo chambers

- Left-leaning users retweet other left-leaning users 87% of the time

- Right-leaning users retweet other right-leaning users 89% of the time

This indicates a slightly higher propensity of within-cohort interaction for right-leaning users. Left-leaning users retweet around 13 percent across the aisle, whereas right-leaning users retweet 10 percent across the aisle.
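The within-group percentages can be read as a row-normalized retweet matrix: for each retweeter leaning, divide the count of retweets to each author leaning by that retweeter group's total. A minimal sketch, assuming retweets are available as `(retweeter_leaning, author_leaning)` pairs (the function name and toy counts are illustrative, chosen to mirror the left-leaning figures above):

```python
from collections import Counter, defaultdict

def within_group_shares(retweets):
    """For each retweeter leaning, return the share of retweets going to each author leaning."""
    pairs = list(retweets)
    pair_counts = Counter(pairs)
    totals = Counter(src for src, _ in pairs)
    shares = defaultdict(dict)
    for (src, dst), n in pair_counts.items():
        shares[src][dst] = n / totals[src]
    return dict(shares)

# Toy data: 100 retweets by left-leaning users, 87 of them within-group.
toy = [("left", "left")] * 87 + [("left", "right")] * 13
print(within_group_shares(toy))
```

Normalizing per row is what separates the Fig. 2b view (behavior within each group) from the Fig. 2a view (shares of all retweets), where group sizes dominate.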

Bots retweet both left-leaning and right-leaning users, but predominantly retweet from the same side of the aisle.

However,

- 18 percent of left-leaning bots’ retweets are from right-leaning humans

- 11 percent of right-leaning bots’ retweets are from left-leaning humans

This indicates left-leaning bots have a more diverse retweet appetite than right-leaning bots do

Foreign Interference Operations

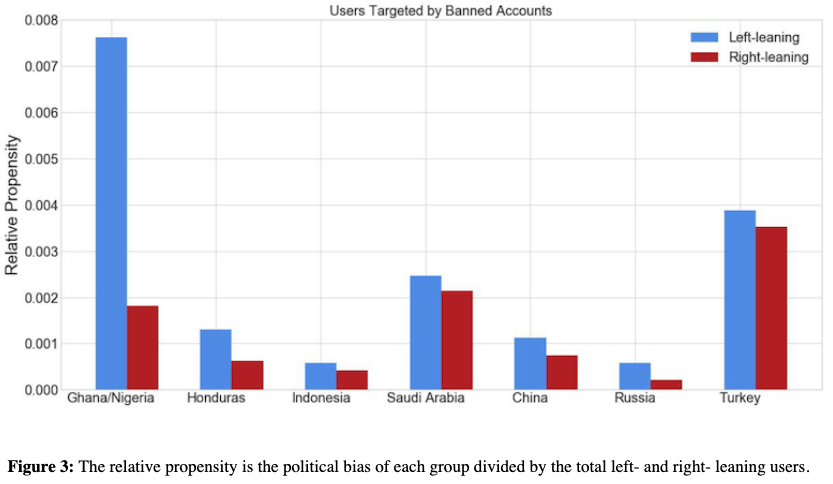

Looking at the Twitter data of foreign interference operations, where users are categorized by country, the authors determine the propensity of these accounts, by country, to be either left- or right-leaning politically. [Fig 3.]

- They do this by utilizing the accounts that those accounts had interacted with/retweeted (I'm assuming in the same manner as mentioned above)

Political Bias of Foreign Interference Accounts

Political Affiliation and Banned User Interaction

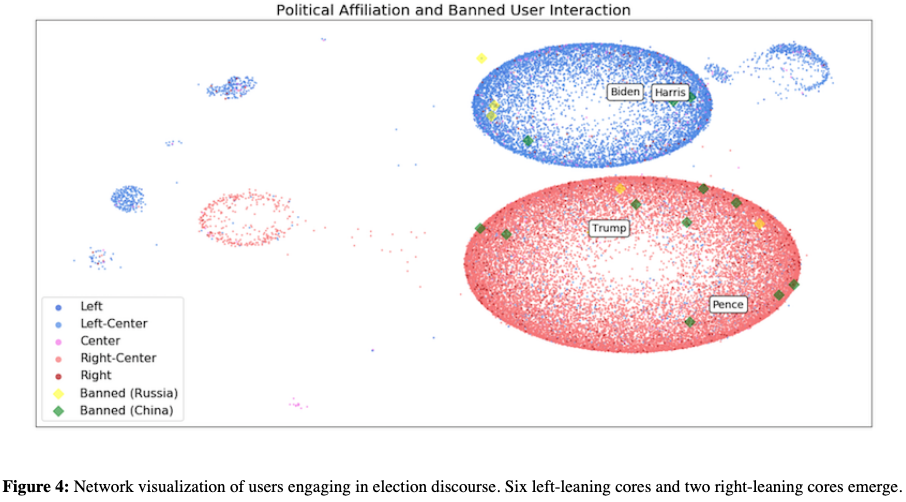

Next, they plot specific targets of banned users, and their relative position in the Twitter network.

Edges are weighted by the number of retweets or quoted tweets between users

Only include users who have:

Shared more than five politically oriented URLs and

Links with weights greater than 100

- Where weights convey the total amount of pairwise interactions between two nodes
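The two inclusion criteria can be expressed as a filter over a weighted edge list. This is a sketch under assumptions: the data structures, the function name, and the requirement that both endpoints pass the URL criterion are illustrative choices, not details confirmed by the paper.

```python
def filter_network(edges, url_counts, min_urls=5, min_weight=100):
    """Keep edges heavier than min_weight whose endpoints both shared more than min_urls URLs.

    edges: {(user_a, user_b): total pairwise interactions}
    url_counts: {user: number of politically oriented URLs shared}
    """
    active = {user for user, n in url_counts.items() if n > min_urls}
    return {
        pair: weight
        for pair, weight in edges.items()
        if weight > min_weight and pair[0] in active and pair[1] in active
    }

edges = {("a", "b"): 250, ("a", "c"): 90, ("b", "d"): 300}
url_counts = {"a": 12, "b": 7, "c": 40, "d": 2}
print(filter_network(edges, url_counts))
```

Here `("a", "c")` is dropped for its low weight and `("b", "d")` because user `d` shared too few political URLs.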

Visualization was generated using the distributed recursive layout algorithm and the Force Atlas algorithm (Jacomy, et al., 2014)

Observations:

Roughly six left-leaning clusters (in blue) and two right-leaning clusters (in red).

- This indicates that right-leaning users are more tightly knit than left-leaning users are

We also show the position of banned Chinese-ops users (green diamonds) and Russian operations (yellow diamonds)

Since banned users often interact with Twitter celebrities, the users shown are ones exclusive to each cohort. That is, yellow diamonds are users who have only been associated with banned Russian accounts.

We also observe that Chinese state-sponsored users tend to interact with Republican users more.

Russian sponsored interactions also emerged outside of the right-leaning and left-leaning cores.

- Together, these suggest information operations are targeted toward specific communities based on partisan or ideological leanings

Distortion

In order to stay away from the "conundrum" of establishing veracity, the authors decide to focus on conspiracy theories.

Conspiracy theories are most likely false narratives, oftentimes postulated upon rumors or unverifiable information, that appear in social networks shared by users or groups with the aim to deliberately deceive unsuspecting individuals who genuinely believe in such claims (van Prooijen, 2019)

The Conspiracy Theories

They focus on three conspiracy theories:

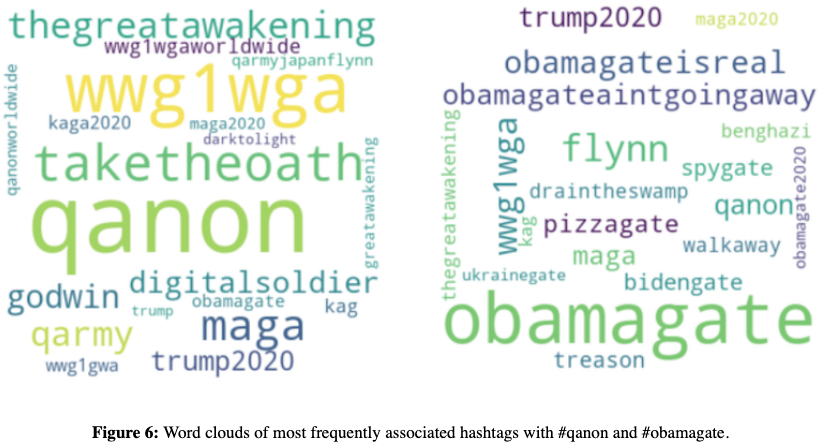

QAnon: A far-right conspiracy movement which has gained popularity in the run up to the 2020 election. This group’s theory suggests that President Trump has been battling against a satan-worshipping global child sex-trafficking ring and an anonymous source called ‘Q’ is cryptically providing secret information about the ring (Zuckerman, 2019).

- These users frequently use hashtags such as #qanon, #wwg1wga (where we go one, we go all), #taketheoath, #thegreatawakening and #qarmy.

“gate” conspiracies: Another indicator of conspiratorial content is signalled by the suffix ‘-gate’ with theories such as obamagate, an unvalidated claim against Barack Obama’s officials that allegedly conspired to entrap Trump’s former national security adviser, Michael Flynn, as part of a larger plot to bring down the then-incoming president. Another example of a “gate” conspiracy theory is pizzagate, a debunked claim that connects several high-ranking Democratic Party officials and U.S. restaurants with an alleged human trafficking and child sex ring.

Covid conspiracies: A plethora of false claims related to the coronavirus pandemic emerged recently. They are mostly about the scale of the pandemic and the origin, prevention, diagnosis, and treatment of the disease. The false claims typically go alongside the hashtags such as #plandemic, #scandemic or #fakevirus.

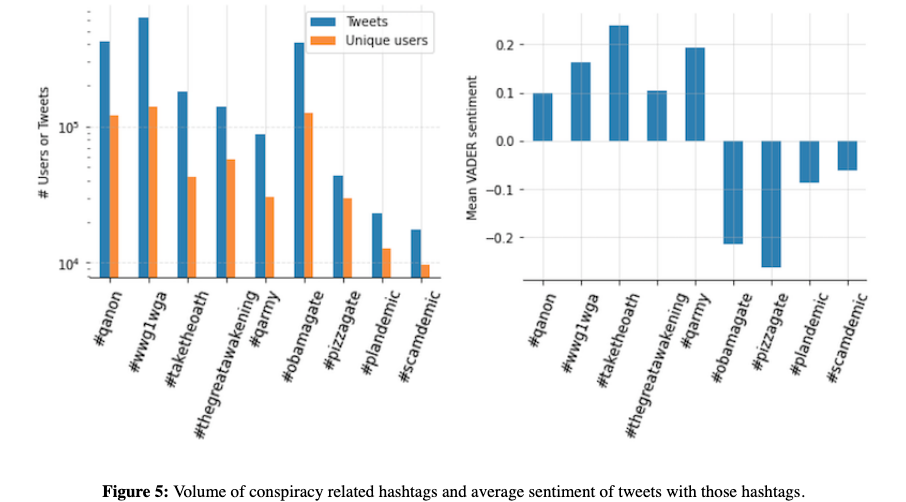

General Observations:

Q-Anon narratives have more highly active and engaged users (as measured by the ratio of tweets to unique users) than -gate narratives

- Most frequently used hashtag, #wwg1wga, had more than 600K tweets from 140K unique users

- #obamagate had 414K tweets from 125K users

- This suggests that the QAnon community using hashtags such as #wwg1wga has a more active user base
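As a quick worked check of the engagement measure, the tweets-per-user ratio can be computed directly from the counts quoted above. The #wwg1wga tweet count is reported only as "more than 600K", so its ratio is a rough lower bound.

```python
# (tweets, unique users) as quoted in the notes; 600K is a lower bound for #wwg1wga.
counts = {"#wwg1wga": (600_000, 140_000), "#obamagate": (414_000, 125_000)}

ratios = {tag: tweets / users for tag, (tweets, users) in counts.items()}

for tag, ratio in ratios.items():
    print(f"{tag}: {ratio:.2f} tweets per unique user")
# #wwg1wga: 4.29 tweets per unique user
# #obamagate: 3.31 tweets per unique user
```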

Using VADER (Valence Aware Dictionary and sEntiment Reasoner; Hutto and Gilbert, 2014), the sentiment of the hashtags is calculated as well

- Interestingly, the mean sentiment of QAnon associated tweets was much more positive when compared to the mean sentiment for ‘gate’ and Covid conspiracy theories, which are negative

Nine conspiracy narratives are broken down below ([Fig. 5])

Nine Popular Conspiracies

- Examples of these popular hashtags can be found via the original text here

Word Clouds of co-occurring hashtags were utilized to find additional hashtags that were popular and to further investigate.

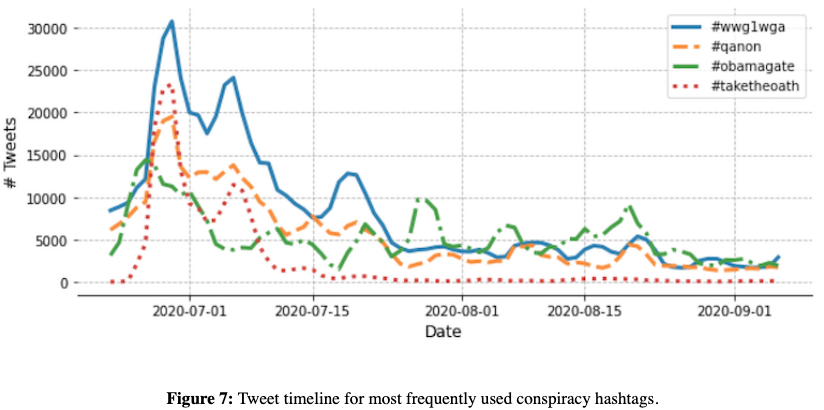

Top four tracked conspiracy theory hashtags

We see that conspiracy theory hashtags tend to drop off in mid-July; this is likely due to Twitter engaging in a takedown of over 7,000 QAnon associated accounts.

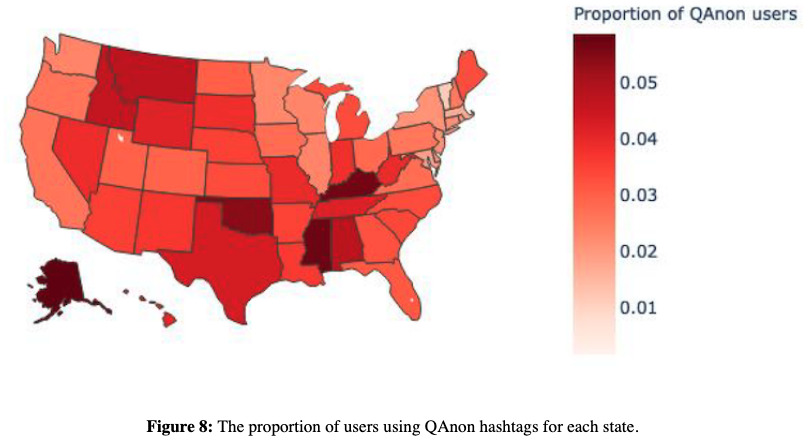

Self-Reported Geographic Location of Q-Anon Users

57.6% of users report a location in their Twitter profile...

- Below is that representation for Q-Anon Users in the U.S.

Conspiracies and Media Bias

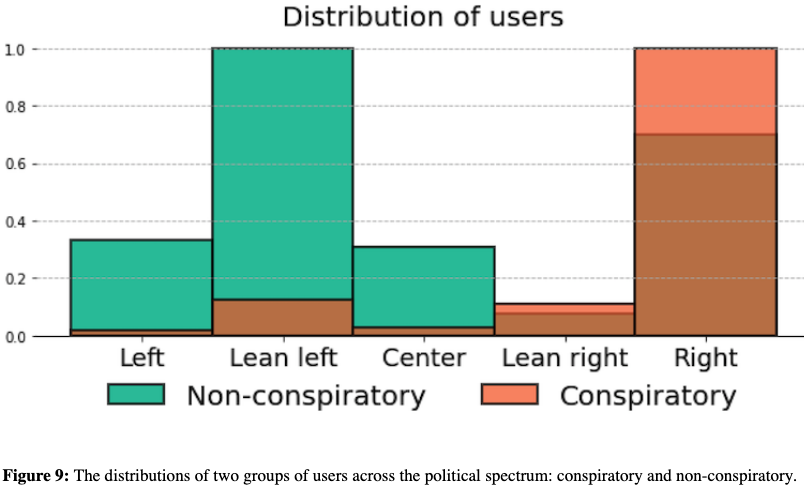

They then look at whether left vs. right users share conspiratorial narratives differently

Users are considered "endorsing" a narrative if they retweet a tweet with a conspiracy hashtag

Users are given a score from 0 to 1, which represents the proportion of their endorsements which are conspiratorial narratives

Users are then dichotomized into two groups:

- Conspiratory

- Non-conspiratory

This binary classification is based on a threshold value t:

- All users who have a conspiratory score larger than t will be classified as the conspiratory users

- The threshold value t is calculated as a median value of all positive conspiratory scores
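The scoring and thresholding steps can be sketched as follows. The input format (one boolean per endorsement, `True` when the retweeted tweet carried a conspiracy hashtag) and the function names are assumptions made for illustration.

```python
from statistics import median

def conspiratory_scores(endorsements):
    """Share of each user's endorsements that carry a conspiracy hashtag (0 to 1)."""
    return {user: sum(flags) / len(flags) for user, flags in endorsements.items() if flags}

def classify(scores):
    """Return (t, labels): t is the median of all positive scores,
    and labels marks users whose score is strictly larger than t."""
    positive = [s for s in scores.values() if s > 0]
    if not positive:
        return None, {user: False for user in scores}
    t = median(positive)
    return t, {user: s > t for user, s in scores.items()}

endorsements = {
    "a": [True, True, False, False],     # score 0.5
    "b": [True, False, False, False],    # score 0.25
    "c": [False, False],                 # score 0.0
    "d": [True, True, True, True],       # score 1.0
}
t, labels = classify(conspiratory_scores(endorsements))
print(t, labels)
```

Taking the median over positive scores only means users who never endorse conspiracy content do not drag the threshold toward zero.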

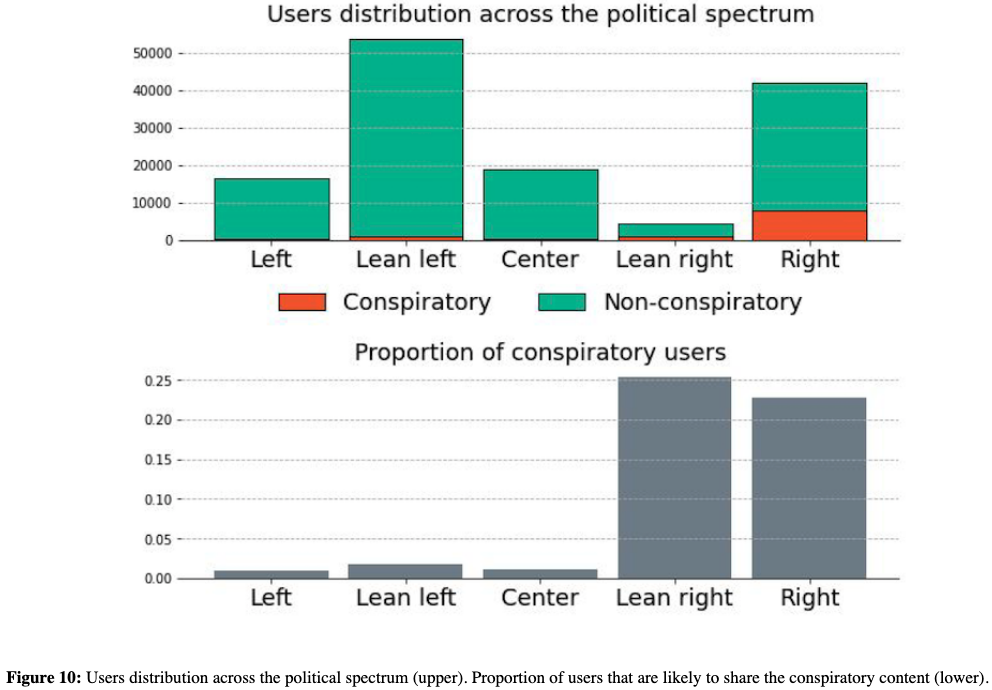

Political Ideology and Conspiracy Endorsement

Groups are significantly different

Conspiratory users tend to skew to the right

- Suggests that the users who are prone to share the conspiratory narratives are likely to endorse the right-leaning media.

The non-conspiratory users, those unlikely to share the conspiratory narratives, are distributed more equally across the political spectrum with significant proportions of them on left and center of the spectrum.

A two-sided t-test, performed on both continuous (t=5.17) and discrete (t=7.5) data, confirms that the two distributions are significantly different with p<0.005.
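For readers unfamiliar with the statistic, here is a sketch of how a two-sample t value is computed. The notes do not say which variant the authors used; this example assumes Welch's unequal-variance form and uses made-up numbers, and it computes only the statistic, not the p-value.

```python
from math import sqrt
from statistics import mean, variance

def welch_t(sample_a, sample_b):
    """Welch's t statistic: difference in means over the combined standard error."""
    standard_error = sqrt(
        variance(sample_a) / len(sample_a) + variance(sample_b) / len(sample_b)
    )
    return (mean(sample_a) - mean(sample_b)) / standard_error

# Hypothetical political-bias scores for two small groups of users.
conspiratory = [2, 3, 4, 5, 6]
non_conspiratory = [1, 2, 3, 4, 5]
print(welch_t(conspiratory, non_conspiratory))
```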

Note: I believe the two shades of red/brown are supposed to be the same color (conspiratory). This distinction in color is not mentioned in the publication at all so this is my own assumption.

- The top graph in Figure 10 represents the number of users as opposed to the proportion of users (seen in Figure 9 above)

- The bottom graph shows the proportion of conspiratory users who fall within each political category that users were bucketed into

Almost a quarter of users who endorse predominantly right-leaning media platforms are likely to engage in sharing conspiratory narratives. Out of all users who endorse left-leaning media, approximately two percent are likely to share conspiratory narratives.

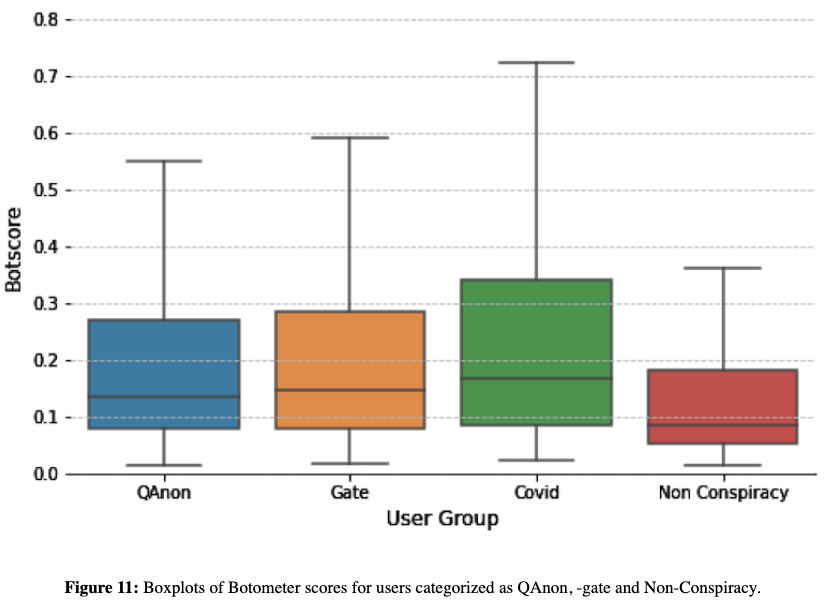

Conspiracies and Bots

The main question [the authors] seek to answer is: are bots used to target groups and how do they push conspiracy narratives with news related media?

We compare the botscores of four groups

- QAnon (27K users)

- -gate (21K users)

- Covid (3K users)

- non-conspiracy (30K users)

Non-conspiracy user = someone who did not use any of the tracked conspiratorial hashtags

A user who uses conspiratorial hashtags can be assigned to multiple conspiracy groups if they use keywords from more than one category

We can see that Q-Anon and -Gate communities are quite similar

- Of the -gate users, 55 percent are identified as also sharing QAnon hashtags

Covid has the highest level of bots and (thankfully) the non-conspiracy community is much less bot-like than the others

- Of the Covid community, 78 percent use QAnon hashtags

This overlap suggests that users who share conspiracy related content are prone to adopting multiple conspiracy narratives and that the communities are highly connected.

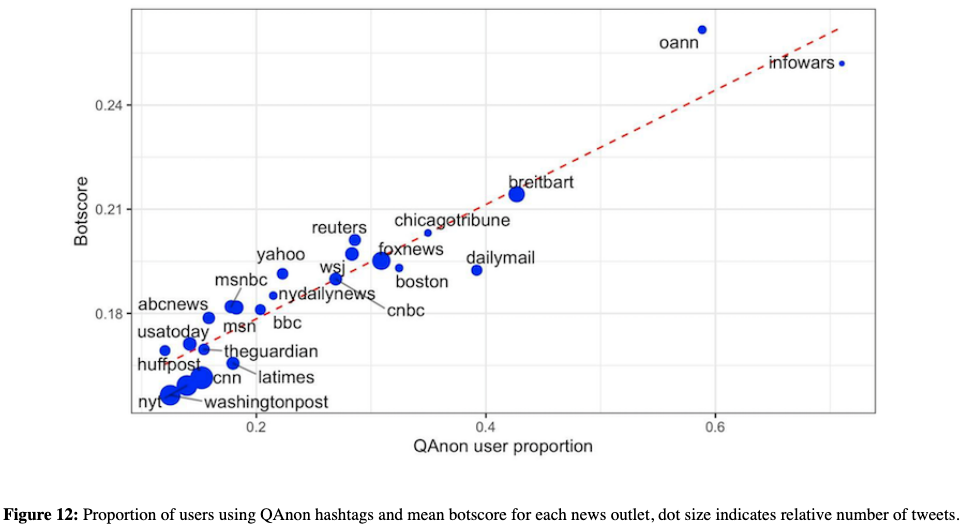

Hyper-partisan Media Outlets and Bots

The authors also investigate the proportion of users sharing URLs from these news Web sites who have also used QAnon hashtags at any point in our dataset

Hyper-partisan news outlets like One America News Network (OANN) and Infowars are outliers but see the greatest proportion of their user base tweeting QAnon material

- These two outlets also have the highest bot scores, but the volume of tweets that contain these Web site URLs is fairly low.

Left-leaning news outlets such as the New York Times and Washington Post have a low Botometer score and proportion of QAnon users, but the volume of tweets mentioning these URLs is much (approx. 29 times) larger.

The proportion of users using QAnon keywords is highly correlated with the average Botometer score (correlation coefficient: 0.947) across the spectrum of left, right and neutral outlets.
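The notes do not specify which correlation coefficient was used. Assuming it is Pearson's r, the computation over per-outlet pairs (proportion of QAnon users, average Botometer score) would look like the sketch below; the outlet values are made up for illustration.

```python
from math import sqrt

def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    spread_x = sqrt(sum((x - mean_x) ** 2 for x in xs))
    spread_y = sqrt(sum((y - mean_y) ** 2 for y in ys))
    return cov / (spread_x * spread_y)

# Hypothetical per-outlet values: share of QAnon users and mean bot score.
qanon_share = [0.02, 0.05, 0.10, 0.30]
mean_botscore = [0.10, 0.12, 0.18, 0.35]
print(pearson(qanon_share, mean_botscore))
```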

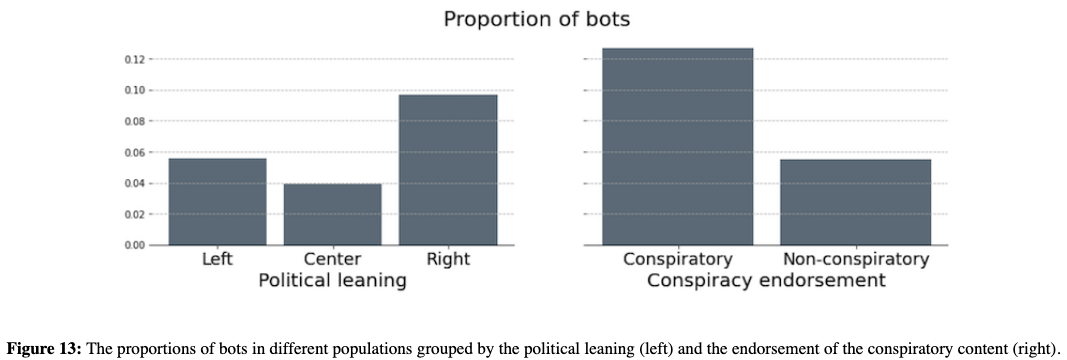

Also explore the proportion of bots and compare it to their political leaning and usage of conspiratory language.

Bots appear across the political spectrum and are likely to endorse polarizing views.

The smallest fraction of automated accounts is among the users who endorse centric media outlets (4%).

A larger proportion of automated accounts endorse right-leaning media outlets.

- Almost 10% of all users who share content from right-leaning media are most likely automated, compared to less than six percent of users who share left-leaning news.

By performing a t-test on the distributions of bot scores they confirm that the differences between the pairs (left-center, center-right and left-right) are all significant with p<0.005.

The proportion of bots varies between users who are likely to share conspiratory narratives and those who are not.

Almost 13% of all users that endorse conspiracy narratives are likely automated.

- This is significantly more than accounts who never share conspiracy narratives, where only five percent of them are likely to be automated (significant with t=4.4 and p<0.005).

It is possible that such observations are in part the byproduct of the fact that bots are programmed to interact with more engaging content, and inflammatory topics such as conspiracy theories provide fertile ground for engagement (Stella, et al., 2018). On the other hand, bot activity can inflate certain narratives and make them popular. The automated accounts that are a part of an organized campaign can purposely propel some of the conspiracy narratives, further polarizing the political discourse.

Notes by Matthew R. DeVerna